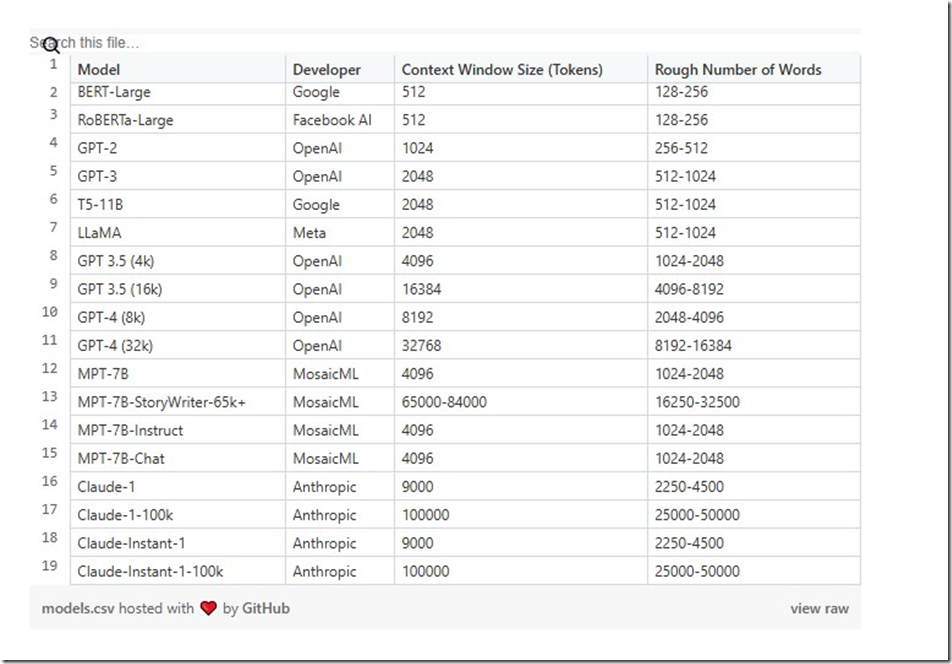

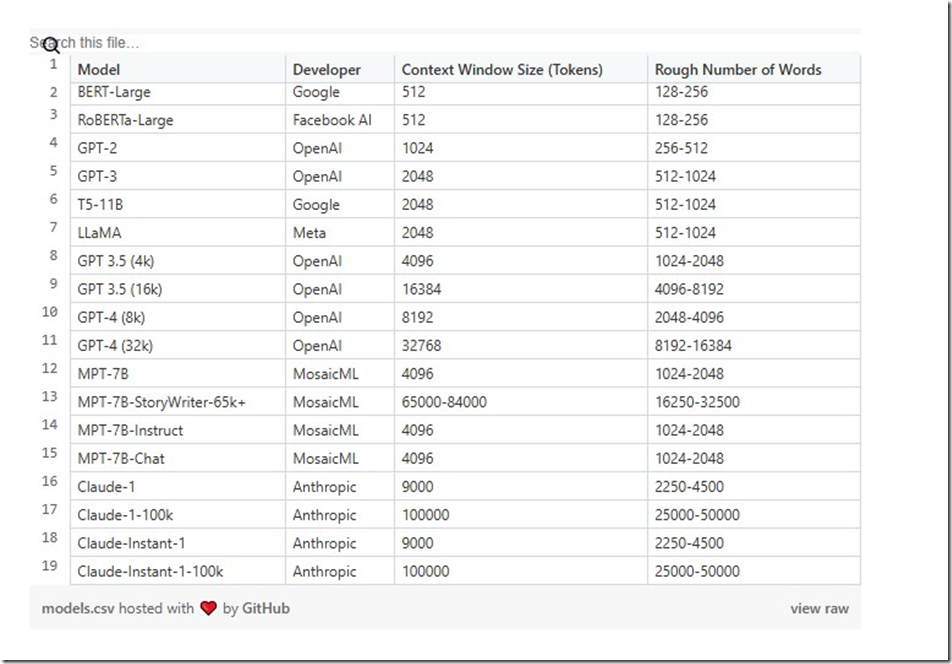

https://gist.githubusercontent.com/seandearnaley/953ae9945676488cf6f1a03cbb1db63d/raw/a13cae3c7b940b08db9ab92c1f7c6cea6d911350/models.csv

| Model | Developer | Context Window Size (Tokens) | Rough Number of Words |
| --- | --- | --- | --- |
| BERT-Large | Google | 512 | 128-256 |
| RoBERTa-Large | Facebook AI | 512 | 128-256 |
| GPT-2 | OpenAI | 1024 | 256-512 |
| GPT-3 | OpenAI | 2048 | 512-1024 |
| T5-11B | Google | 2048 | 512-1024 |
| LLaMA | Meta | 2048 | 512-1024 |
| GPT 3.5 (4k) | OpenAI | 4096 | 1024-2048 |
| GPT 3.5 (16k) | OpenAI | 16384 | 4096-8192 |
| GPT-4 (8k) | OpenAI | 8192 | 2048-4096 |
| GPT-4 (32k) | OpenAI | 32768 | 8192-16384 |
| MPT-7B | MosaicML | 4096 | 1024-2048 |
| MPT-7B-StoryWriter-65k+ | MosaicML | 65000-84000 | 16250-32500 |
| MPT-7B-Instruct | MosaicML | 4096 | 1024-2048 |
| MPT-7B-Chat | MosaicML | 4096 | 1024-2048 |
| Claude-1 | Anthropic | 9000 | 2250-4500 |
| Claude-1-100k | Anthropic | 100000 | 25000-50000 |
| Claude-Instant-1 | Anthropic | 9000 | 2250-4500 |
| Claude-Instant-1-100k | Anthropic | 100000 | 25000-50000 |
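
The word counts above appear to follow a simple rule of thumb: divide the context window by four for the low estimate and by two for the high estimate. That rule is inferred from the listed values rather than stated anywhere in the data, so treat the sketch below as an approximation; the function name `rough_word_range` is made up for illustration.

```python
def rough_word_range(context_tokens: int) -> tuple[int, int]:
    """Estimate how many words fit in a context window.

    Assumes 2 to 4 tokens per word, the ratio implied by the table.
    Real ratios depend on the tokenizer and on the text itself.
    """
    low = context_tokens // 4   # pessimistic: 4 tokens per word
    high = context_tokens // 2  # optimistic: 2 tokens per word
    return low, high


# A few rows from the table, recomputed from their token counts.
for model, tokens in [
    ("BERT-Large", 512),
    ("GPT-4 (32k)", 32768),
    ("Claude-1-100k", 100000),
]:
    low, high = rough_word_range(tokens)
    print(f"{model}: {tokens} tokens ~ {low}-{high} words")
```

For an exact figure, count tokens with the tokenizer that belongs to the model in question instead of relying on a fixed ratio.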